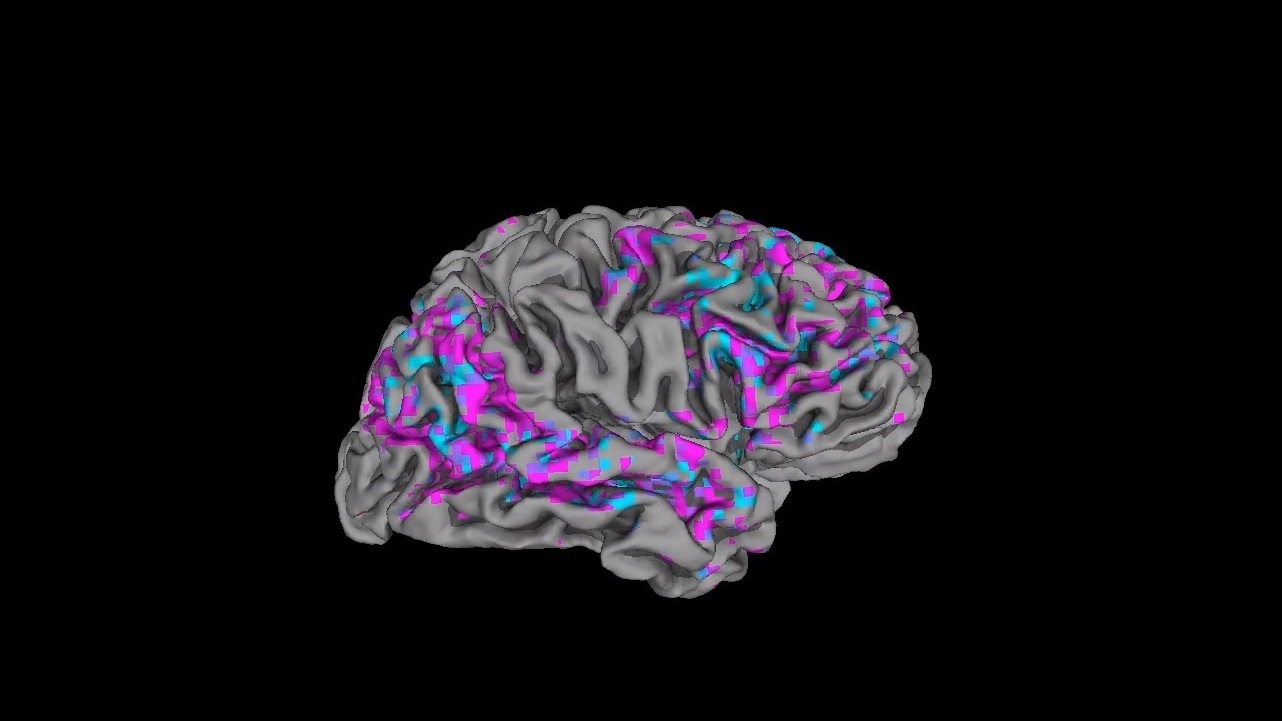

This video still shows a view of one person’s cerebral cortex. Pink areas have above-average activity; blue areas have below-average activity.

Jerry Tang and Alexander Huth

Scientists have found a way to decode a stream of words in the brain using MRI scans and artificial intelligence.

The system reconstructs the gist of what a person hears or imagines, rather than trying to replicate every word, a team reports in the journal Nature Neuroscience.

“It’s getting at the ideas behind the words, the semantics, the meaning,” says Alexander Huth, an author of the study and an assistant professor of neuroscience and computer science at The University of Texas at Austin.

This technology can’t read minds, though. It only works when a participant is actively cooperating with scientists.

Still, systems that decode language could someday help people who are unable to speak because of a brain injury or disease. They are also helping scientists understand how the brain processes words and thoughts.

Previous efforts to decode language have relied on sensors placed directly on the surface of the brain. The sensors detect signals in areas involved in articulating words.

But the Texas team’s approach is an attempt to “decode more freeform thought,” says Marcel Just, a professor of psychology at Carnegie Mellon University who was not involved in the new research.

That could mean it has applications beyond communication, he says.

“One of the biggest scientific medical challenges is understanding mental illness, which is a brain dysfunction ultimately,” Just says. “I think that this general kind of approach is going to solve that puzzle someday.”

Podcasts in the MRI

The new study came about as part of an effort to understand how the brain processes language.

Researchers had three people spend up to 16 hours each in a functional MRI scanner, which detects signs of activity across the brain.

Participants wore headphones that streamed audio from podcasts. “For the most part, they just lay there and listened to stories from The Moth Radio Hour,” Huth says.

Those streams of words produced activity all over the brain, not just in areas associated with speech and language.

“It turns out that a huge amount of the brain is doing something,” Huth says. “So areas that we use for navigation, areas that we use for doing mental math, areas that we use for processing what things feel like to touch.”

After participants listened to hours of stories in the scanner, the MRI data was sent to a computer. It learned to match specific patterns of brain activity with certain streams of words.

Next, the team had participants listen to new stories in the scanner. Then the computer attempted to reconstruct these stories from each participant’s brain activity.

The system got a lot of help constructing intelligible sentences from artificial intelligence: an early version of the famous natural language processing program ChatGPT.

What emerged from the system was a paraphrased version of what a participant heard.

So if a participant heard the phrase, “I didn’t even have my driver’s license yet,” the decoded version might be, “she hadn’t even learned to drive yet,” Huth says. In many cases, he says, the decoded version contained errors.

In another experiment, the system was able to paraphrase words a person just imagined saying.

In a third experiment, participants watched videos that told a story without using words.

“We didn’t tell the subjects to try to describe what’s happening,” Huth says. “And yet what we got was this kind of language description of what’s going on in the video.”

A noninvasive window on language

The MRI approach is currently slower and less accurate than an experimental communication system being developed for paralyzed people by a team led by Dr. Edward Chang at the University of California, San Francisco.

“People get a sheet of electrical sensors implanted directly on the surface of the brain,” says David Moses, a researcher in Chang’s lab. “That records brain activity really close to the source.”

The sensors detect activity in brain areas that usually give speech commands. At least one person has been able to use the system to accurately generate 15 words a minute using only his thoughts.

But with an MRI-based approach, “No one has to get surgery,” Moses says.

Neither approach can be used to read a person’s thoughts without their cooperation. In the Texas study, people were able to defeat the system just by telling themselves a different story.

But future versions could raise ethical questions.

“This is very exciting, but it’s also a little scary,” Huth says. “What if you can read out the word that somebody is just thinking in their head? That’s potentially a harmful thing.”

Moses agrees.

“This is all about the user having a new way of communicating, a new tool that is totally in their control,” he says. “That is the goal and we have to make sure that stays the goal.”
